
Objective:

To learn about generative adversarial models.

Deliverable:

For this lab, you will need to implement a generative adversarial network (GAN). Specifically, we will be using the technique outlined in the paper Improved Training of Wasserstein GANs.

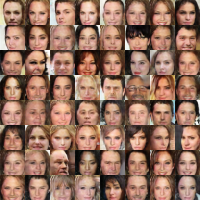

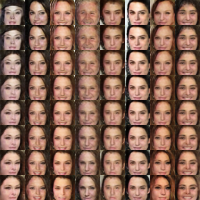

You should turn in an iPython notebook that shows two plots. The first plot should be random samples from the final generator. The second should show interpolation between two faces by interpolating in z space.

You must also turn in your code, but your code does not need to be in a notebook if it's easier to turn it in separately (but please zip your code and notebook together in a single zip file).

NOTE: this lab is complex. Please read through the entire spec before diving in.

Also, note that training on this dataset will likely take some time. Please make sure you start early enough to run the training long enough!

Grading standards:

Your code/image will be graded on the following:

- 20% Correct implementation of discriminator

- 20% Correct implementation of generator

- 50% Correct implementation of training algorithm

- 10% Tidy and legible final image

Dataset:

The dataset you will be using is the "celebA" dataset, a set of 202,599 face images of celebrities. Each image is 178×218. You should download the “aligned and cropped” version of the dataset. Here is a direct download link (1.4G), and here is additional information about the dataset.

Description:

This lab will help you develop several new PyTorch skills, as well as understand some best practices needed for building large models. In addition, we'll be able to create networks that generate neat images!

Part 0: Implement a generator network

One of the advantages of the “Improved WGAN Training” algorithm is that many different kinds of topologies can be used. For this lab, I recommend one of three options:

- The DCGAN architecture, see Fig. 1.

- A ResNet.

- Our reference implementation used 5 layers:

  - A fully connected layer

  - 4 transposed convolution layers, each followed by a batch norm layer and ReLU (except for the final layer)

  - A final sigmoid (the true images are in the range 0 to 1, so the generated images should be too)
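As a rough sketch of the reference layout described above, the following assumes a 100-dimensional z, a 64×64 output, 4×4 kernels with stride 2, and a `base` channel width of 64. None of these sizes come from the lab spec; they are illustrative choices, and the class name `Generator` is hypothetical.

```python
import torch
import torch.nn as nn


class Generator(nn.Module):
    """Fully connected layer followed by 4 transposed convolutions (illustrative sizes)."""

    def __init__(self, z_dim=100, base=64):
        super().__init__()
        self.base = base
        # Project z to a 4x4 feature map with base*8 channels.
        self.fc = nn.Linear(z_dim, base * 8 * 4 * 4)

        def up(c_in, c_out):
            # Transposed conv that doubles the spatial size, then batch norm and ReLU.
            return nn.Sequential(
                nn.ConvTranspose2d(c_in, c_out, kernel_size=4, stride=2, padding=1),
                nn.BatchNorm2d(c_out),
                nn.ReLU(),
            )

        self.net = nn.Sequential(
            up(base * 8, base * 4),  # 4x4 -> 8x8
            up(base * 4, base * 2),  # 8x8 -> 16x16
            up(base * 2, base),      # 16x16 -> 32x32
            # Final layer: no batch norm or ReLU; the sigmoid maps pixels to [0, 1].
            nn.ConvTranspose2d(base, 3, kernel_size=4, stride=2, padding=1),  # 32x32 -> 64x64
            nn.Sigmoid(),
        )

    def forward(self, z):
        x = self.fc(z).view(-1, self.base * 8, 4, 4)
        return self.net(x)
```

With these assumed sizes, `Generator()(torch.randn(16, 100))` yields a batch of shape `(16, 3, 64, 64)`, so the training images would need to be resized to 64×64 to match, or an extra upsampling block added for larger outputs.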

Part 1: Implement a discriminator network

Again, you are encouraged to use either a DCGAN-like architecture, or a ResNet.

Our reference implementation used 4 convolution layers, each followed by a batch norm layer and leaky ReLU (leak 0.2), with no batch norm on the first layer.

Note that the discriminator simply outputs a single scalar value. This value should be unconstrained (i.e., it can be positive or negative), so you should not use a ReLU or sigmoid on the output of your network.
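One possible shape for such a discriminator is sketched below. It assumes 64×64 RGB inputs, 4×4 kernels with stride 2, and a final linear layer that reduces the last feature map to one score. The linear layer, the sizes, and the `use_norm` flag are assumptions made for illustration, not details of the reference implementation.

```python
import torch
import torch.nn as nn


class Discriminator(nn.Module):
    """4 strided convolutions with leaky ReLU, then an unconstrained scalar score."""

    def __init__(self, base=64, use_norm=True):
        super().__init__()

        def down(c_in, c_out, norm):
            # Strided conv halves the spatial size; batch norm is optional.
            layers = [nn.Conv2d(c_in, c_out, kernel_size=4, stride=2, padding=1)]
            if norm:
                layers.append(nn.BatchNorm2d(c_out))
            layers.append(nn.LeakyReLU(0.2))
            return nn.Sequential(*layers)

        self.features = nn.Sequential(
            down(3, base, norm=False),            # 64x64 -> 32x32, no batch norm on first layer
            down(base, base * 2, use_norm),       # 32x32 -> 16x16
            down(base * 2, base * 4, use_norm),   # 16x16 -> 8x8
            down(base * 4, base * 8, use_norm),   # 8x8 -> 4x4
        )
        # Assumed output head: a linear map to one score, with no activation after it.
        self.out = nn.Linear(base * 8 * 4 * 4, 1)

    def forward(self, x):
        h = self.features(x).flatten(start_dim=1)
        return self.out(h).squeeze(1)  # shape: (batch,)
```

Setting `use_norm=False` gives the variant without batch norm, which is also what the WGAN-GP paper suggests for the critic, since the gradient penalty is defined per sample.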

Part 2: Implement the Improved Wasserstein GAN training algorithm

The implementation of the improved Wasserstein GAN training algorithm (hereafter called “WGAN-GP”) is fairly straightforward, but involves a few new details:

- Gradient norm penalty. First of all, you must compute the gradient of the output of the discriminator with respect to x-hat. To do this, you should use the `autograd.grad` function.

- Reuse of variables. Remember that because the discriminator is being called multiple times, you must ensure that you do not create new copies of the variables. Use `requires_grad = True` for the parameters of the discriminator. An easier way to do this would be to iterate through the discriminator model parameters and set `param.requires_grad = True`.

- Trainable variables. In the algorithm, two different Adam optimizers are created, one for the generator, and one for the discriminator. You must make sure that each optimizer is only training the proper subset of variables!

    # initialize your generator and discriminator models
    # initialize separate optimizer for both gen and disc
    # initialize your dataset and dataloader
    for e in epochs:
        for true_img in trainloader:
            # train discriminator #
            # because you want to be able to backprop through the params in discriminator
            for p in disc_model.parameters():
                p.requires_grad = True
            for p in gen_model.parameters():
                p.requires_grad = False
            for n in range(critic_iters):
                disc_optim.zero_grad()
                # generate noise tensor z
                # calculate disc loss: you will need autograd.grad
                # call dloss.backward() and disc_optim.step()

            # train generator #
            for p in disc_model.parameters():
                p.requires_grad = False
            for p in gen_model.parameters():
                p.requires_grad = True
            gen_optim.zero_grad()
            # generate noise tensor z
            # calculate loss for gen
            # call gloss.backward() and gen_optim.step()
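The gradient norm penalty is the part most likely to be unfamiliar, so here is a hedged sketch of one way to compute it with `torch.autograd.grad`. It assumes image batches of shape `(N, C, H, W)` and a discriminator that returns one score per sample. The function names `gradient_penalty` and `critic_loss` and the argument `lam` are hypothetical, not part of the lab's starter code.

```python
import torch


def gradient_penalty(disc, real, fake, lam=10.0):
    """Sketch of the WGAN-GP term: lam * mean((||grad of D at x_hat||_2 - 1)^2)."""
    batch = real.size(0)
    # One mixing coefficient per sample, broadcast over channels and pixels.
    eps = torch.rand(batch, 1, 1, 1, device=real.device)
    # x_hat lies on the line between a real and a generated image.
    x_hat = (eps * real.detach() + (1 - eps) * fake.detach()).requires_grad_(True)
    scores = disc(x_hat)
    (grads,) = torch.autograd.grad(
        outputs=scores,
        inputs=x_hat,
        grad_outputs=torch.ones_like(scores),
        create_graph=True,  # keep the graph so the penalty can itself be backpropagated
    )
    grad_norm = grads.flatten(start_dim=1).norm(2, dim=1)
    return lam * ((grad_norm - 1) ** 2).mean()


def critic_loss(disc, real, fake, lam=10.0):
    # The critic minimizes D(fake) - D(real) plus the gradient penalty.
    wasserstein = disc(fake.detach()).mean() - disc(real).mean()
    return wasserstein + gradient_penalty(disc, real, fake, lam)
```

Inside the critic loop, `dloss = critic_loss(disc_model, true_img, gen_model(z))` followed by `dloss.backward()` and `disc_optim.step()` would fit the skeleton above; the generator loss in this formulation is simply `-disc_model(gen_model(z)).mean()`.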

Part 3: Generating the final face images

Your final deliverable is two images. The first should be a set of randomly generated faces. This is as simple as generating random z variables, and then running them through your generator.

For the second image, you must pick two random z values, then linearly interpolate between them (using about 8-10 steps). Plot the face corresponding to each interpolated z value.

See the beginning of this lab spec for examples of both images.
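The interpolation step can be sketched as follows. The helper name `interpolate_z` is hypothetical, and the sketch assumes z vectors are 1-D tensors of equal length.

```python
import torch


def interpolate_z(z_start, z_end, steps=8):
    """Return a (steps, z_dim) tensor moving linearly from z_start to z_end."""
    # t runs from 0 to 1 inclusive, so the endpoints are reproduced exactly.
    t = torch.linspace(0.0, 1.0, steps).unsqueeze(1)  # shape: (steps, 1)
    return (1 - t) * z_start + t * z_end
```

The resulting batch can be passed through the generator in eval mode under `torch.no_grad()`, and the output images laid out in a single row, for example with `torchvision.utils.make_grid(images, nrow=steps)`.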

Hints and implementation notes:

We have recently tried turning off the batchnorms in both the generator and discriminator, and have gotten good results – you may want to start without them, and only add them if you need them. Plus, it's faster without the batchnorms.

The reference implementation was trained for 8 hours on a GTX 1070. It ran for 25 epochs (i.e., 25 passes through all 200,000 images), with batches of size 64 (3125 batches / epoch).

However, we were able to get reasonable (if blurry) faces after training for 2-3 hours.

I didn't try to optimize the hyperparameters; these are the values that I used:

    beta1 = 0.5             # paper: 0.0
    beta2 = 0.999           # paper: 0.9
    lambda = 10
    ncritic = 1             # paper: 5
    learning_rate = 0.0002  # paper: 0.0001
    batch_size = 200
    batch_norm_decay = 0.9
    batch_norm_epsilon = 1e-5

Changing the number of critic steps from 5 to 1 didn't seem to matter; changing the learning rate (alpha in the paper) to 0.0001 didn't seem to matter; but changing beta1 and beta2 to the values suggested in the paper (0.0 and 0.9, respectively) seemed to make things a lot worse. Different values may work better for different setups, so experiment to find the numbers that work well for you.
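As a sketch of how the two optimizers might be created with the learning rate and beta values listed above, the snippet below uses small `nn.Linear` modules as stand-ins so that it runs on its own. They are placeholders, not the lab's networks.

```python
import torch
import torch.nn as nn

# Stand-in modules so the snippet is self-contained; substitute your real networks.
gen_model = nn.Linear(100, 8)
disc_model = nn.Linear(8, 1)

learning_rate = 0.0002
beta1, beta2 = 0.5, 0.999

# Each optimizer receives only its own network's parameters, so a step on one
# optimizer can never update the other network.
gen_optim = torch.optim.Adam(gen_model.parameters(), lr=learning_rate, betas=(beta1, beta2))
disc_optim = torch.optim.Adam(disc_model.parameters(), lr=learning_rate, betas=(beta1, beta2))
```

Passing `betas=(0.0, 0.9)` and `lr=0.0001` instead would reproduce the paper's suggested settings for comparison.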

This code should be helpful to get the data:

    !wget --load-cookies cookies.txt 'https://docs.google.com/uc?export=download&confirm='"$(wget --save-cookies cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=0B7EVK8r0v71pZjFTYXZWM3FlRnM' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/\1\n/p')"'&id=0B7EVK8r0v71pZjFTYXZWM3FlRnM' -O img_align_celeba.zip
    !unzip -q img_align_celeba
    !mkdir test
    !mv img_align_celeba test

And using the data in a dataset class:

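    import os
    import torchvision
    from torch.utils.data import Dataset
    from torchvision import transforms

    class CelebaDataset(Dataset):
        def __init__(self, root, size=128, train=True):
            super(CelebaDataset, self).__init__()
            # ImageFolder expects root/<subfolder>/<images>, e.g. test/img_align_celeba/
            transform = transforms.Compose([transforms.Resize((size, size)), transforms.ToTensor()])
            self.dataset_folder = torchvision.datasets.ImageFolder(os.path.join(root), transform=transform)

        def __getitem__(self, index):
            # ImageFolder yields (image, label); return only the image tensor
            img = self.dataset_folder[index]
            return img[0]

        def __len__(self):
            return len(self.dataset_folder)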