Objective:

To learn about deconvolutions, variable sharing, trainable variables, and generative adversarial models.

Deliverable:

For this lab, you will need to implement a generative adversarial network (GAN). Specifically, we will be using the technique outlined in the paper Improved Training of Wasserstein GANs.

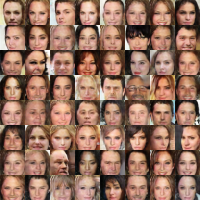

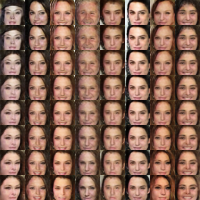

You should turn in an IPython notebook that shows two plots. The first plot should show random samples from the final generator. The second should show an interpolation between two faces, produced by interpolating in z space.

You must also turn in your code. It does not need to be in a notebook if it is easier to turn it in separately, but please zip your code and notebook together into a single zip file.

NOTE: this lab is complex. Please read through the entire spec before diving in.

Grading standards:

Your code/image will be graded on the following:

- 20% Correct implementation of discriminator

- 20% Correct implementation of generator

- 50% Correct implementation of training algorithm

- 10% Tidy and legible final image

Dataset:

The dataset you will be using is the "celebA" dataset, a set of 202,599 face images of celebrities. Each image is 178×218. You should download the “aligned and cropped” version of the dataset. Here is a direct download link (1.4G), and here is additional information about the dataset.

Description:

This lab will help you develop several new TensorFlow skills and understand some best practices needed for building large models. In addition, we'll be able to create networks that generate neat images!

Part 0: Implement a generator network

One of the advantages of the “Improved WGAN Training” algorithm is that many different kinds of topologies can be used. For this lab, I recommend one of the following options:

- The DCGAN architecture, see Fig. 1.

- A ResNet.

Our reference implementation used 5 layers:

- A fully connected layer

- 4 transposed convolution layers, each followed by a ReLU and a batch norm layer (except for the final layer)

- A final tanh nonlinearity
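As a sanity check on the generator's layer stack, it can help to trace the spatial size of the feature map through the transposed convolutions. The sketch below assumes stride-2 transposed convolutions with 'SAME' padding (so each layer doubles the height and width) and a 4×4 feature map coming out of the fully connected layer. Both are assumptions for illustration, not details of the reference implementation, and `transposed_conv_sizes` is a hypothetical helper.

```python
def transposed_conv_sizes(start, num_layers, stride=2):
    """Spatial size after each transposed convolution.

    Assumes 'SAME' padding, where the output size is input size * stride.
    """
    sizes = [start]
    for _ in range(num_layers):
        sizes.append(sizes[-1] * stride)
    return sizes


# Assumed: the fully connected output is reshaped to a 4x4 feature map.
sizes = transposed_conv_sizes(start=4, num_layers=4)
print(sizes)  # [4, 8, 16, 32, 64]
```

Under these assumptions the generator would produce 64×64 images, so the training images would need to be cropped or resized to match.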

Part 1: Implement a discriminator network

Again, you are encouraged to use either a DCGAN-like architecture, or a ResNet.

Our reference implementation used 4 convolution layers, each followed by a leaky ReLU (leak 0.2) and a batch norm layer, with a sigmoid as the final nonlinearity.
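For reference, a leaky ReLU with leak 0.2 passes positive values unchanged and scales negative values by 0.2. The sketch below shows that function on scalars, along with a shape trace for the convolution stack. The stride of 2, the 'SAME' padding, and the 64×64 input are assumptions for illustration, and both helper names are hypothetical.

```python
import math


def leaky_relu(x, leak=0.2):
    """Leaky ReLU on a scalar: x if x > 0, otherwise leak * x."""
    return max(x, leak * x)


def conv_sizes(start, num_layers, stride=2):
    """Spatial size after each strided convolution.

    Assumes 'SAME' padding, where the output size is ceil(input size / stride).
    """
    sizes = [start]
    for _ in range(num_layers):
        sizes.append(math.ceil(sizes[-1] / stride))
    return sizes


print(leaky_relu(3.0))   # 3.0
print(leaky_relu(-1.0))  # -0.2
print(conv_sizes(start=64, num_layers=4))  # [64, 32, 16, 8, 4]
```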

Part 2: Implement the Improved Wasserstein GAN training algorithm

- Gradients: tf.gradients

- Reuse of variables: scope.reuse_variables

- Trainable variables

- Two Adam optimizers
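To make the objective concrete, here is a minimal pure-Python sketch of the critic and generator losses from the paper, using a toy linear critic so that the input gradient can be written by hand. In a real TensorFlow implementation the gradient with respect to the interpolated samples would presumably come from tf.gradients. The critic would be applied to real, fake, and interpolated batches with shared (reused) variables. Each of the two Adam optimizers would update only its own network's trainable variables. The linear critic, the helper names, and the toy data are all assumptions for illustration. The penalty weight of 10 is the default suggested in the paper.

```python
import math
import random

LAMBDA = 10.0  # gradient-penalty weight (default suggested in the paper)


def critic(w, b, x):
    """Toy linear critic D(x) = w . x + b, standing in for a conv net."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def critic_grad_x(w, b, x):
    """Gradient of the linear critic w.r.t. its input (constant, equal to w)."""
    return list(w)


def critic_loss(w, b, real_batch, fake_batch, rng):
    """Mean of D(fake) - D(real) + LAMBDA * (||grad D(x_hat)|| - 1)^2."""
    total = 0.0
    for x_real, x_fake in zip(real_batch, fake_batch):
        eps = rng.random()  # one mixing coefficient per sample pair
        x_hat = [eps * r + (1 - eps) * f for r, f in zip(x_real, x_fake)]
        grad = critic_grad_x(w, b, x_hat)
        grad_norm = math.sqrt(sum(g * g for g in grad))
        penalty = LAMBDA * (grad_norm - 1.0) ** 2
        total += critic(w, b, x_fake) - critic(w, b, x_real) + penalty
    return total / len(real_batch)


def generator_loss(w, b, fake_batch):
    """The generator tries to raise the critic's score on fake samples."""
    return -sum(critic(w, b, x) for x in fake_batch) / len(fake_batch)


rng = random.Random(0)
real = [[1.0, 1.0]]
fake = [[0.0, 0.0]]
# Gradient norm is 5, so the penalty is 10 * (5 - 1)^2 = 160.
print(critic_loss([3.0, 4.0], 0.0, real, fake, rng))  # 153.0
```

The paper's algorithm alternates several critic updates with one generator update. Keeping the two losses in separate functions mirrors the two-optimizer setup.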

Part 3: Generating the final face images

Your final deliverable is two images. The first should be a set of randomly generated faces. This is as simple as generating random z variables and then running them through your generator.

For the second image, you must pick two random z values, then linearly interpolate between them (using about 8-10 steps). Plot the face corresponding to each interpolated z value.
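The interpolation step can be sketched in a few lines. The example below builds evenly spaced points on the straight line between two z vectors, endpoints included. The latent dimension of 100, the Gaussian prior, and the `lerp_z` helper are assumptions for illustration. Each resulting vector would then be fed through the trained generator and plotted.

```python
import random


def lerp_z(z0, z1, steps):
    """Return `steps` evenly spaced points from z0 to z1 (both included)."""
    path = []
    for i in range(steps):
        t = i / (steps - 1)  # runs from 0.0 to 1.0
        path.append([(1 - t) * a + t * b for a, b in zip(z0, z1)])
    return path


Z_DIM = 100  # assumed latent size, not specified in this lab
rng = random.Random(0)
z0 = [rng.gauss(0.0, 1.0) for _ in range(Z_DIM)]
z1 = [rng.gauss(0.0, 1.0) for _ in range(Z_DIM)]

path = lerp_z(z0, z1, steps=9)
print(len(path), len(path[0]))  # 9 100
```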

See the beginning of this lab spec for examples of both images.

Hints and implementation notes:

The reference implementation was trained for 8 hours on a GTX 1070. It ran for 25 epochs (i.e., 25 passes through all 200,000 images), with batches of size 64 (3125 batches / epoch).
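The numbers above imply a rough per-batch time budget, which can be useful when estimating how long your own run will take. The short calculation below uses only the figures quoted in this section. The variable names are illustrative.

```python
num_images = 200_000
batch_size = 64
epochs = 25
train_hours = 8

batches_per_epoch = num_images // batch_size
total_batches = batches_per_epoch * epochs
seconds_per_batch = train_hours * 3600 / total_batches

print(batches_per_epoch)            # 3125
print(total_batches)                # 78125
print(round(seconds_per_batch, 2))  # 0.37
```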